The portable computing powerhouse is capable of running 120-billion-parameter LLMs, roughly two-thirds the parameter count of GPT-3 (175 billion), without needing to access the internet or the cloud.


(Image credit: Tiiny AI)

A U.S. startup has developed what it claims is the world's smallest artificial intelligence (AI) supercomputer. Packed with high-performance hardware and plenty of RAM, the device can run "Ph.D. intelligence" AI models, company representatives say, despite being compact enough to tuck into your pocket. Models at that level, they say, are capable of autonomous problem solving, abstract reasoning and strategic planning.

The "AI Pocket Lab," as its creators at Tiiny AI have branded the device, is capable of running a complex 120-billion-parameter large language model (LLM) locally, without any reliance on internet connectivity. Models of this size ordinarily need data-center-class infrastructure, so running one on a pocket-sized device opens up the possibility of local expert-level coding assistance, document assessment and refinement, or multi-step reasoning.

It's built around a 12-core ARM processor, of the kind commonly found in smartphones, laptops and tablets. Despite its tiny frame — the device measures just 5.59 × 3.15 × 1.00 inches (14.2 × 8 × 2.53 cm) — it packs 80 GB of LPDDR5X RAM. Most current laptops come with between 8 GB and 32 GB RAM, by way of comparison.
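
To put the 80 GB figure in context, the following is a rough back-of-the-envelope sketch of how much memory the weights of a 120-billion-parameter model would occupy at different precisions. The bit widths are assumptions chosen for illustration; the article does not state what precision the device uses, and the estimate ignores everything other than the weights (for example, working memory used during inference).

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 120e9       # 120-billion-parameter model (figure from the article)
DEVICE_RAM_GB = 80   # total LPDDR5X RAM (figure from the article)

# Assumed precisions, for illustration only; the article does not name one.
for bits in (16, 8, 4):
    need = weight_memory_gb(PARAMS, bits)
    verdict = "fits" if need <= DEVICE_RAM_GB else "does not fit"
    print(f"{bits:>2}-bit weights: {need:.0f} GB, {verdict} in {DEVICE_RAM_GB} GB")
```

Under these assumptions, only the most compact of the three precisions leaves the weights within the device's total RAM, which suggests why memory capacity matters as much as raw compute for local inference.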


A massive 48 GB of the Pocket Lab's RAM is reserved exclusively for the neural processing unit (NPU), a chip optimized for AI-related computations. Both Intel and AMD have been manufacturing processors with dedicated NPUs for a few years, both to handle AI workloads and to meet Microsoft's threshold of 40 trillion operations per second (TOPS) for running AI features on Windows 11.

The Pocket Lab qualifies as a supercomputer (rather than a standard mini-PC or workstation) because of its computational power: it can run workloads, specifically local inference on language models with more than 100 billion parameters, that normally require multi-GPU, data-center-class systems. Current models the device can run include GPT-OSS 120B, large Phi models and high-parameter Llama family models.

This is part of a recent push towards edge computing for AI, in an attempt to ease some of the power constraints and reduce the environmental impact of data-center-based AI processing.

Pocket power

Although it's a far cry from rivaling the world's most powerful supercomputers, the AI Pocket Lab is capable of delivering 190 TOPS of computing power across its NPU and CPU. It represents another step towards miniaturization in the wake of Nvidia's recently announced Project Digits mini PC: it doesn't pack the same horsepower as the Nvidia machine, but it's a fraction of the size.

To pack so much power into such an unassuming chassis, the Tiiny AI team leaned on a number of technologies and optimizations. Key among them was something the company calls TurboSparse, an innovation that allows massive LLMs to run faster on more limited hardware by ensuring a system only calls on the parts of a model that it needs at any given moment. While traditional models use every parameter for each word they process or output, a TurboSparse model uses only a specific subset of its parameters at each step.
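
The general idea of using only some parameters per step can be illustrated with a toy example. The sketch below is not TurboSparse itself, whose internals the article does not describe; it is a minimal illustration of activation sparsity in a small feed-forward layer, where neurons that output zero let the system skip the weights attached to them. All names and sizes are hypothetical.

```python
import random


def relu(v: float) -> float:
    return v if v > 0.0 else 0.0


def hidden_activations(x, w_in):
    """One ReLU activation per hidden neuron (each neuron owns one row of w_in)."""
    return [relu(sum(w * xi for w, xi in zip(row, x))) for row in w_in]


def dense_output(hidden, w_out):
    """Baseline: read every row of w_out, even for neurons that output zero."""
    out = [0.0] * len(w_out[0])
    for h, row in zip(hidden, w_out):
        for j, w in enumerate(row):
            out[j] += h * w
    return out


def sparse_output(hidden, w_out):
    """Skip rows of w_out whose neuron is silent; return output and rows read."""
    out = [0.0] * len(w_out[0])
    rows_read = 0
    for h, row in zip(hidden, w_out):
        if h == 0.0:
            continue  # a silent neuron contributes nothing, so its weights are never read
        rows_read += 1
        for j, w in enumerate(row):
            out[j] += h * w
    return out, rows_read


random.seed(0)
D_MODEL, D_HIDDEN = 8, 32  # toy sizes, chosen only for illustration
x = [random.gauss(0, 1) for _ in range(D_MODEL)]
w_in = [[random.gauss(0, 1) for _ in range(D_MODEL)] for _ in range(D_HIDDEN)]
w_out = [[random.gauss(0, 1) for _ in range(D_MODEL)] for _ in range(D_HIDDEN)]

hidden = hidden_activations(x, w_in)
dense = dense_output(hidden, w_out)
sparse, rows_read = sparse_output(hidden, w_out)
print(f"rows of w_out read: {rows_read} of {D_HIDDEN}; same result: {sparse == dense}")
```

The sparse path produces the same output as the dense one while reading fewer weights. This toy version still computes every hidden activation first, so it only saves work on the second set of weights.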


Another important feature is PowerInfer, which allows for heterogeneous scheduling of the device’s CPU, GPU and NPU. This means that each processor is only given the workload that it's most capable of handling, which makes the entire system more efficient overall and reduces power draw. PowerInfer also includes intelligent power management, deciding when full power is necessary and when it's possible to use less, in part by eliminating unnecessary calculations.
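
As a rough illustration of heterogeneous scheduling in general, the sketch below sends each task to whichever processor has the lowest estimated cost for that kind of work. It is not PowerInfer's actual algorithm, which the article does not detail; the task names and cost figures are invented for illustration and are not measurements of any real hardware.

```python
# Hypothetical relative costs (lower is better) for each kind of work on each
# processor. These numbers are invented for illustration, not measured.
COST = {
    "dense_matmul":         {"cpu": 9.0, "gpu": 2.0, "npu": 1.0},
    "parallel_elementwise": {"cpu": 5.0, "gpu": 1.0, "npu": 3.0},
    "irregular_sparse":     {"cpu": 1.0, "gpu": 4.0, "npu": 6.0},
}


def schedule(tasks):
    """Greedy placement: map each task name to its cheapest processor."""
    plan = {}
    for name, kind in tasks:
        costs = COST[kind]
        plan[name] = min(costs, key=costs.get)
    return plan


# Hypothetical tasks making up one inference step.
tasks = [
    ("projection", "dense_matmul"),
    ("activation", "parallel_elementwise"),
    ("rare_neuron_fetch", "irregular_sparse"),
]

plan = schedule(tasks)
for name, processor in plan.items():
    print(f"{name} -> {processor}")
```

A real scheduler would also need to account for how busy each processor already is and for the cost of moving data between them, which this greedy sketch ignores.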

The implications of a miniature AI supercomputer go beyond reducing our reliance on environmentally harmful data centers. It's a boon to privacy, with users able to deploy the power of a sophisticated LLM without being connected to the internet and without their data being processed in the cloud by third parties. It also enables AI access in fieldwork situations, such as at remote research stations or on ships and aircraft out of connectivity range.

Alan Bradley, Freelance contributor

Alan is a freelance tech and entertainment journalist who specializes in computers, laptops, and video games. He's previously written for sites like PC Gamer, GamesRadar, and Rolling Stone. If you need advice on tech, or help finding the best tech deals, Alan is your man.

View MoreYou must confirm your public display name before commenting

Please logout and then login again, you will then be prompted to enter your display name.

Logout Read more Microsoft says its newest AI chip Maia 200 is 3 times more powerful than Google's TPU and Amazon's Trainium processor

Microsoft says its newest AI chip Maia 200 is 3 times more powerful than Google's TPU and Amazon's Trainium processor

Tapping into new 'probabilistic computing' paradigm can make AI chips use much less power, scientists say

Tapping into new 'probabilistic computing' paradigm can make AI chips use much less power, scientists say

Acing this new AI exam — which its creators say is the toughest in the world — might point to the first signs of AGI

Acing this new AI exam — which its creators say is the toughest in the world — might point to the first signs of AGI

'Thermodynamic computer' can mimic AI neural networks — using orders of magnitude less energy to generate images

'Thermodynamic computer' can mimic AI neural networks — using orders of magnitude less energy to generate images

Scientists build 'most accurate' quantum computing chip ever thanks to new silicon-based computing architecture

Scientists build 'most accurate' quantum computing chip ever thanks to new silicon-based computing architecture

MIT's chip stacking breakthrough could cut energy use in power-hungry AI processes

Latest in Computing

MIT's chip stacking breakthrough could cut energy use in power-hungry AI processes

Latest in Computing

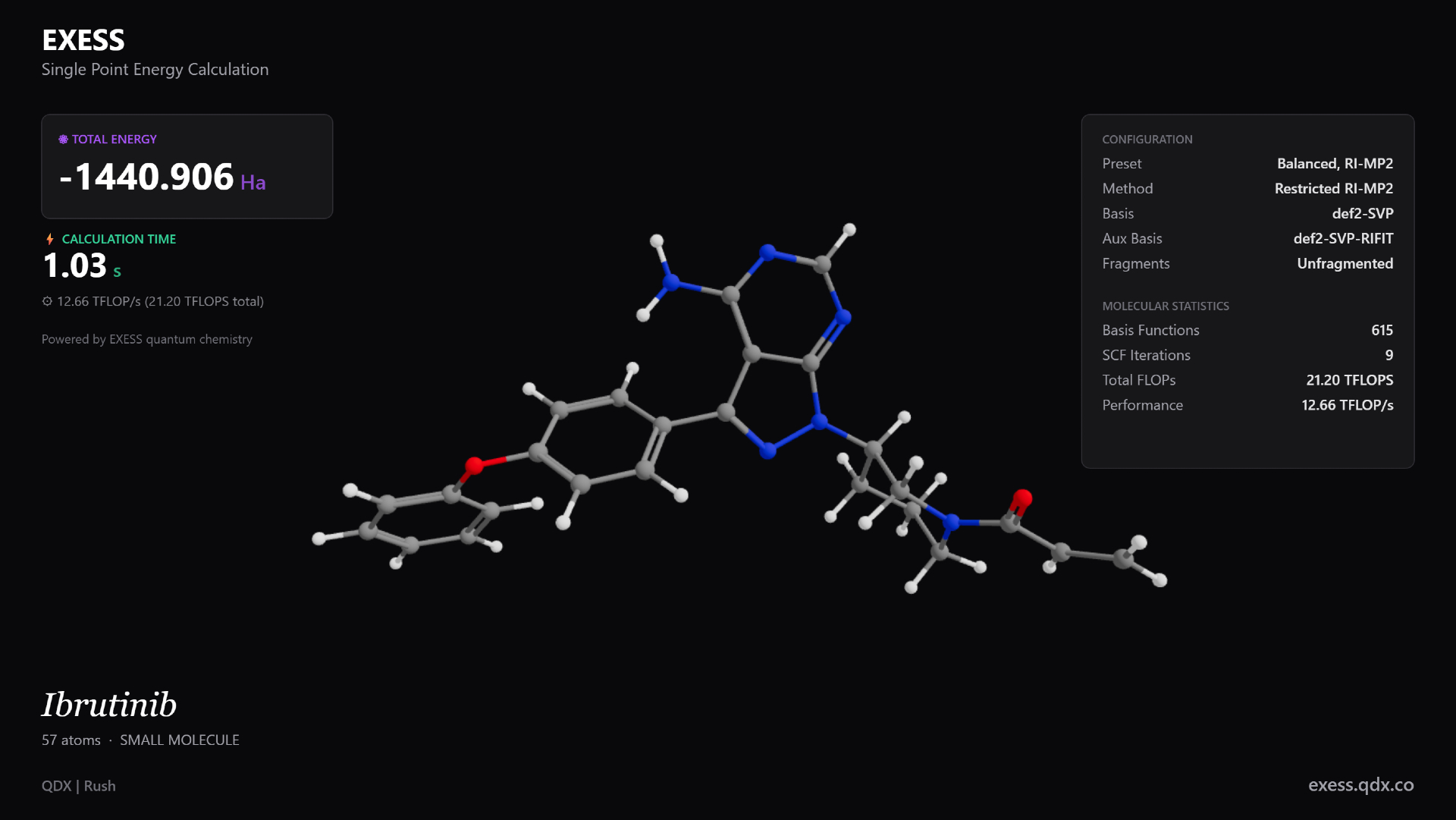

Ultrafast quantum chemistry engine could speed up the development of new medicines and materials

Ultrafast quantum chemistry engine could speed up the development of new medicines and materials

'Thermodynamic computer' can mimic AI neural networks — using orders of magnitude less energy to generate images

'Thermodynamic computer' can mimic AI neural networks — using orders of magnitude less energy to generate images

Microsoft can now store data for 10,000 years on everyday glass thanks to laser breakthrough

Microsoft can now store data for 10,000 years on everyday glass thanks to laser breakthrough

MIT designs computing component that uses waste heat 'as a form of information'

MIT designs computing component that uses waste heat 'as a form of information'

Google Glass has found yet another lease of life — but is it too little too late for smart glasses?

Google Glass has found yet another lease of life — but is it too little too late for smart glasses?

Tapping into new 'probabilistic computing' paradigm can make AI chips use much less power, scientists say

Latest in News

Tapping into new 'probabilistic computing' paradigm can make AI chips use much less power, scientists say

Latest in News

'City killer' asteroid will narrowly miss the moon, James Webb Telescope reveals

'City killer' asteroid will narrowly miss the moon, James Webb Telescope reveals

Groundbreaking new drug shows promise for treating children with a devastating form of epilepsy

Groundbreaking new drug shows promise for treating children with a devastating form of epilepsy

Scientists taught robots to swim through mazes using Einstein's relativity

Scientists taught robots to swim through mazes using Einstein's relativity

The sword in the sea: How one lucky graduate student found his second Crusader sword while taking a swim off Israel's coast

The sword in the sea: How one lucky graduate student found his second Crusader sword while taking a swim off Israel's coast

Climate disasters caused societal upheaval 3,000 years ago in China, study of 'oracle bones' hints

Climate disasters caused societal upheaval 3,000 years ago in China, study of 'oracle bones' hints

'Truly extraordinary': Mega-laser shooting at us from halfway across the universe is the brightest 'cosmic beacon' we've ever seen

LATEST ARTICLES

'Truly extraordinary': Mega-laser shooting at us from halfway across the universe is the brightest 'cosmic beacon' we've ever seen

LATEST ARTICLES 1'City killer' asteroid will narrowly miss the moon, James Webb Telescope reveals

1'City killer' asteroid will narrowly miss the moon, James Webb Telescope reveals- 2Groundbreaking new drug shows promise for treating children with a devastating form of epilepsy

- 3Scientists taught robots to swim through mazes using Einstein's relativity

- 4The sword in the sea: How one lucky graduate student found his second Crusader sword while taking a swim off Israel's coast

- 5Sodium-ion batteries are getting ready for prime time. How can they improve EVs?