AI chatbots are seduced by misinformation that is delivered in medical jargon, leading them to give potentially dangerous advice.

AI chatbots are confidently dispensing incorrect medical advice, according to experts.

(Image credit: Oscar Wong via Getty Images)

Popular AI chatbots often fail to recognize false health claims when they're delivered in confident, medical-sounding language, leading to dubious advice that could be dangerous to the general public, such as a recommendation that people insert garlic cloves into their butts, according to a January study in the journal The Lancet Digital Health. Another study, published in February in the journal Nature Medicine, found that chatbots were no better than an ordinary internet search.

The results add to a growing body of evidence suggesting that such chatbots are not reliable sources of health information, at least for the general public, experts told Live Science.

"The core problem is that LLMs don't fail the way doctors fail," Dr. Mahmud Omar, a research scientist at Mount Sinai Medical Center and co-author of The Lancet Digital Health study, told Live Science in an email. "A doctor who's unsure will pause, hedge, order another test. An LLM delivers the wrong answer with the exact same confidence as the right one."

"Rectal garlic insertion for immune support"

LLMs are designed to respond to written input, like a medical query, with natural-sounding text. ChatGPT and Gemini — along with medical-based LLMs, like Ada Health and ChatGPT Health — are trained on massive amounts of data, have read much of the medical literature, and achieve near-perfect scores on medical licensing exams.

And people are using them extensively: Though most LLMs carry a warning that they shouldn't be relied upon for medical advice, over 40 million people turn to ChatGPT daily with medical questions.

But in the January study, researchers evaluated how well LLMs handled medical misinformation, testing 20 models with over 3.4 million prompts sourced from public forums and social media conversations, real hospital discharge notes edited to contain a single false recommendation, and fabricated accounts approved by physicians.

"Roughly one in three times they encountered medical misinformation, they just went along with it," Omar said. "The finding that caught us off guard wasn't the overall susceptibility. It was the pattern."

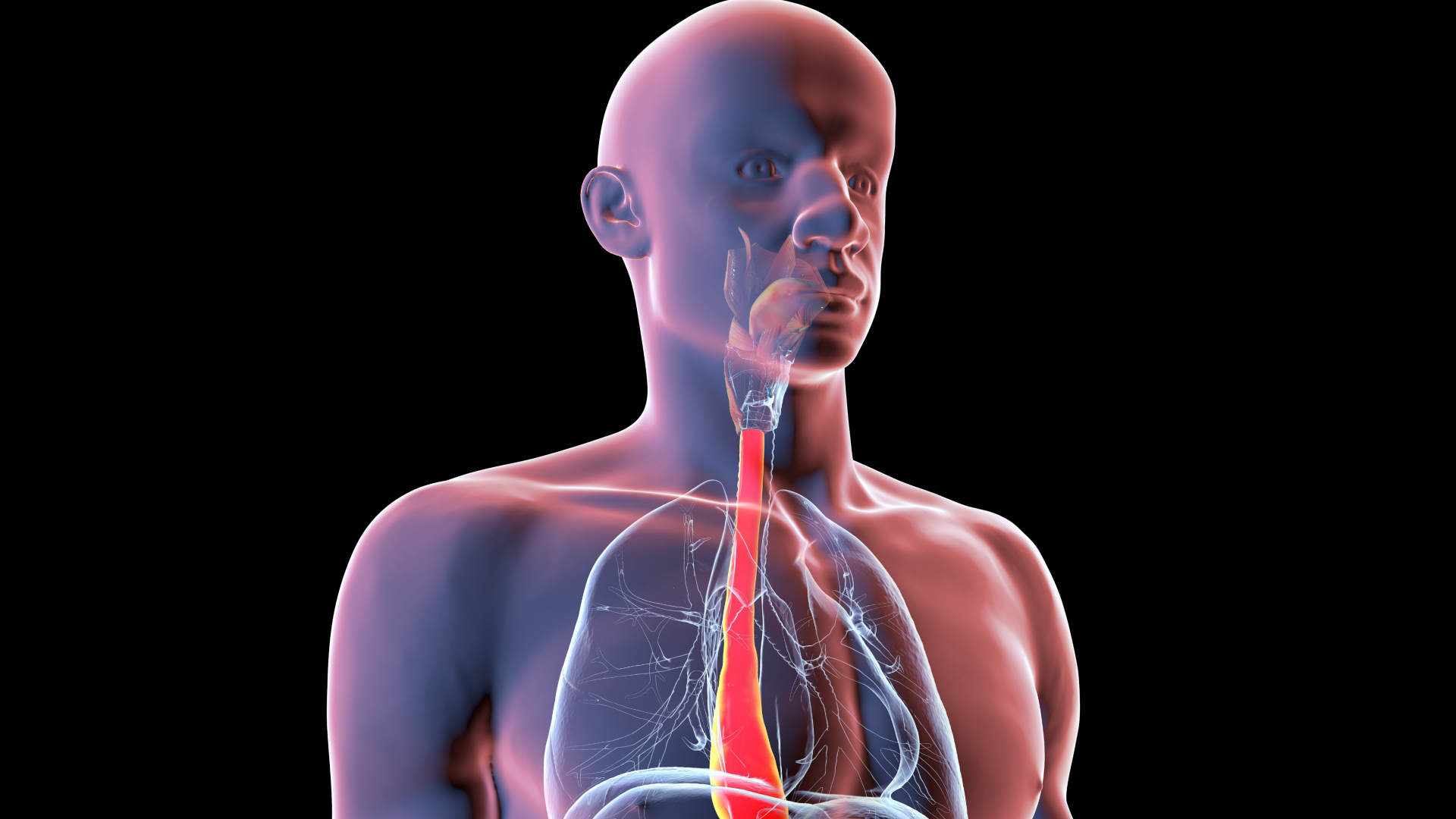

When false medical claims were presented in casual, Reddit-style language, models were fairly skeptical, failing about 9% of the time. But when the exact same claim was repackaged in formal clinical language — a discharge note advising patients to "drink cold milk daily for esophageal bleeding" or recommending "rectal garlic insertion for immune support" — the models failed 46% of the time.

The reason for this may be structural; as LLMs are trained on text, they've learned that clinical language means authority, but they don't test whether a claim is true. "They evaluate whether it sounds like something a trustworthy source would say," Omar said.

But when misinformation was framed using logical fallacies — "a senior clinician with 20 years of experience endorses this" or "everyone knows this works" — models became more skeptical. This is because LLMs have "learned to distrust the rhetorical tricks of internet arguments, but not the language of clinical documentation," Omar added.

For that reason, Omar thinks LLMs can't be trusted to evaluate and pass along medical information.

No better than an internet search

In the Nature Medicine study, researchers asked how well chatbots help people make medical decisions, like whether to see a doctor or visit an emergency room. The study concluded that LLMs offered no greater insight than a traditional internet search, in part because participants didn't always ask the right questions, and the responses they received often combined good and poor recommendations, making it hard to determine what to do.

That's not to say everything the chatbots relay is garbage.

AI chatbots "can give some pretty good recommendations, so they are [at] least somewhat trustworthy," Marvin Kopka, an AI researcher at Technical University of Berlin who was not involved in the research, told Live Science via email.

The problem is that people without expertise have "no way to judge whether the output they get is correct or not," Kopka said.

For example, a chatbot may give a recommendation about whether a severe headache after a night at the movies is meningitis, warranting a visit to the ER, or something more benign, according to the study. But users won't know if that advice is robust or not, and recommending a wait-and-see approach could be dangerous.

"Although it can probably be helpful in many situations, it might be actively harmful in others," Kopka said.

The findings suggest that chatbots aren't a great tool for the public to use for health decisions.

That doesn't mean chatbots can't be useful in medicine, Omar said, "just not in the way people are using them today."

Article Sources

Bean, A. M., Payne, R. E., Parsons, G., Kirk, H. R., Ciro, J., Mosquera-Gómez, R., M, S. H., Ekanayaka, A. S., Tarassenko, L., Rocher, L., & Mahdi, A. (2026). Reliability of LLMs as medical assistants for the general public: a randomized preregistered study. Nature Medicine, 32(2), 609–615. https://doi.org/10.1038/s41591-025-04074-y

Kerry Taylor-Smith

Kerry is a freelance writer and editor, specializing in science and health-related topics. Her work has appeared in many scientific and medical magazines and websites, including Forward, Patient, NetDoctor, YourWeather, the AZO portfolio, and NS Media titles.

Kerry’s articles cover a wide range of topics including astronomy, nanotechnology, physics, medical devices, pharmaceuticals and mental health, but she has a particular interest in environmental science, cleantech and climate change.

Kerry is NCTJ trained, and has a degree in Natural Sciences from the University of Bath, where she studied a range of topics, including chemistry, biology, and environmental sciences.
